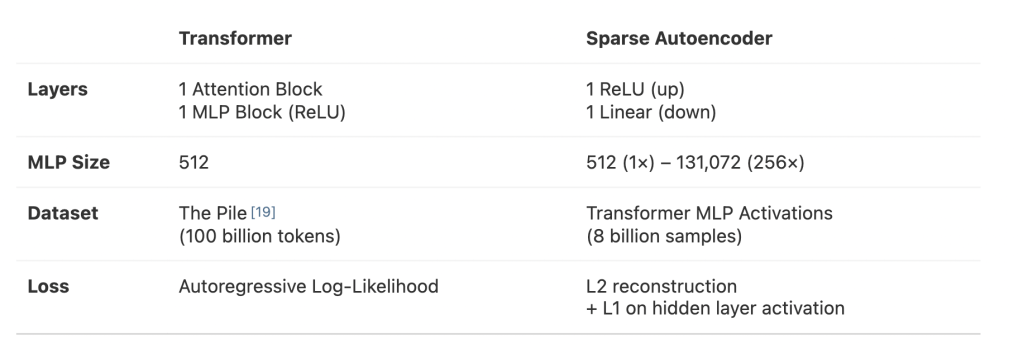

This is a cool paper from Anthropic. They trained a model to take the (hard-to-interpret) activations of a neural net and decompose them into distinct features. The goal of this approach is to build a set of features that are causally related to the original model and each uniquely correlate with some human-recognizable concept to make it easier to interpret what’s going on conceptually under the hood of the original model. If you could do that really well, you could help humans grok results that models find in black-box-y ways and might help ensure AI reliability by making how it understands and is executing on what it’s asked to do legible to humans.

And it seems like their results were really encouraging:

Sparse Autoencoders extract relatively monosemantic features. We provide four different lines of evidence: detailed investigations for a few features firing in specific contexts for which we can construct computational proxies, human analysis for a large random sample of features, automated interpretability analysis of activations for all the features learned by the autoencoder, and finally automated interpretability analysis of logit weights for all the features. Moreover, the last three analyses show that most learned features are interpretable. While we do not claim that our interpretations catch all aspects of features’ behaviors, by constructing metrics of interpretability consistently for features and neurons, we quantitatively show their relative interpretability.

Sparse autoencoders produce interpretable features that are effectively invisible in the neuron basis. We find features (e.g., one firing on Hebrew script) which are not active in any of the top dataset examples for any of the neurons.

Features connect in “finite-state automata”-like systems that implement complex behaviors. For example, we find features that work together to generate valid HTML.

In fact, they end up kind of calling their shot that they worked out a key piece of theory and now it’s just a matter of engineering to do real mechanistic interpretability:

it increasingly seems like a large chunk of the mechanistic interpretability agenda will now turn on succeeding at a difficult engineering and scaling problem, which frontier AI labs have significant expertise in.

Chris Olah put the point even more finely on Twitter:

If you’d asked me a year ago, superposition would have been by far the reason I was most worried that mechanistic interpretability would hit a dead end. I’m now very optimistic. I’d go as far as saying it’s now primarily an engineering problem — hard, but less fundamental risk.

Let’s see! It’s cool to see this field emerge in real-time, but, as far as I can tell, there haven’t been any really practical results yet. Regardless of how good a foundation sparse autoencoders prove to be, I’m excited to continue following what Anthropic and others are working on.

Leave a comment